Complete Features

These features were completed when this image was assembled.

Currently the "Get started with on-premise host inventory" quickstart is delivered in the Core console. If we are going to keep it here, we need to add the MCE or ACM operator as a prerequisite; otherwise it is very confusing.

Epic Goal

- Make it possible to disable the console operator at install time, while still having a supported+upgradeable cluster.

Why is this important?

- It's possible to disable console itself using spec.managementState in the console operator config. There is no way to remove the console operator, though. For clusters where an admin wants to completely remove console, we should give the option to disable the console operator as well.

Scenarios

- I'm an administrator who wants to minimize my OpenShift cluster footprint and who does not want the console installed on my cluster

Acceptance Criteria

- It is possible at install time to opt-out of having the console operator installed. Once the cluster comes up, the console operator is not running.

Dependencies (internal and external)

- Composable cluster installation

Previous Work (Optional):

- https://docs.google.com/document/d/1srswUYYHIbKT5PAC5ZuVos9T2rBnf7k0F1WV2zKUTrA/edit#heading=h.mduog8qznwz

- https://docs.google.com/presentation/d/1U2zYAyrNGBooGBuyQME8Xn905RvOPbVv3XFw3stddZw/edit#slide=id.g10555cc0639_0_7

Open questions:

- The console operator manages the downloads deployment as well. Do we disable the downloads deployment? Long term we want to move to CLI manager: https://github.com/openshift/enhancements/blob/6ae78842d4a87593c63274e02ac7a33cc7f296c3/enhancements/oc/cli-manager.md

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Update the cluster-authentication-operator to not go degraded when it can’t determine the console url. This risks masking certain cases where we would want to raise an error to the admin, but the expectation is that this failure mode is rare.

Risk could be avoided by looking at ClusterVersion's enabledCapabilities to decide if missing Console was expected or not (unclear if the risk is high enough to be worth this amount of effort).

AC: Update the cluster-authentication-operator to not go degraded when console config CRD is missing and ClusterVersion config has Console in enabledCapabilities.

We need to continue to maintain specific areas within storage. This epic captures that effort and tracks it across releases.

Goals

- To allow OCP users and cluster admins to detect problems early and with as little interaction with Red Hat as possible.

- When Red Hat is involved, make sure we have all the information we need from the customer, i.e. in metrics / telemetry / must-gather.

- Reduce storage test flakiness so we can spot real bugs in our CI.

Requirements

| Requirement | Notes | isMvp? |
|---|---|---|
| Telemetry | No | |
| Certification | No | |
| API metrics | No | |

Out of Scope

n/a

Background, and strategic fit

With the expected scale of our customer base, we want to keep the load of customer tickets / BZs low.

Assumptions

Customer Considerations

Documentation Considerations

- Target audience: internal

- Updated content: none at this time.

Notes

In progress:

- CSI certification flakes a lot. We should fix it before we start testing migration.

- In progress (API server restarts...) https://bugzilla.redhat.com/show_bug.cgi?id=1865857

- Get local-storage-operator and AWS EBS CSI driver operator logs in must-gather (OLM-managed operators are not included there)

- In progress for LSO (must-gather script being included in image) https://bugzilla.redhat.com/show_bug.cgi?id=1756096

- CI flakes:

- Configurable timeouts for e2e tests

- Azure is slow and times out often

- Cinder times out formatting volumes

- AWS resize test times out

High prio:

- Env. check tool for VMware - users often mis-configure permissions there and blame OpenShift. If we had a tool they could run, it might report better errors.

- Should it be part of the installer?

- Spike exists

- Add / use cloud API call metrics

- Helps customers to understand why things are slow

- Helps build cop to understand a flake

- With a post-install step that filters data from Prometheus that’s still running in the CI job.

- Ideas:

- Cloud is throttling X% of API calls longer than Y seconds

- Attach / detach / provisioning / deletion / mount / unmount / resize takes longer than X seconds?

- Capture metrics of operations that are stuck and won’t finish.

- Sweep operation map from executioner???

- Report the operation metric into the highest bucket once the bucket threshold has passed (i.e. if 10 minutes is the last bucket, report an operation into this bucket after 10 minutes and don’t wait for its completion)?

- Ask the monitoring team?

- Include in CSI drivers too.

- With alerts too

- Report events for cloud issues

- E.g. cloud API reports weird attach/provision error (e.g. due to outage)

- Which volume plugins do users actually use the most? https://issues.redhat.com/browse/STOR-324

Unsorted

- As the number of storage operators grows, it would be good to have a Grafana board for storage operators

- CSI driver metrics (from CSI sidecars + the driver itself + its operator?)

- CSI migration?

- Get aggregated logs in cluster

- They're rotated too soon

- No logs from dead / restarted pods

- No tools to combine logs from multiple pods (e.g. 3 controller managers)

- What storage issues do customers have? They were 22% of all issues.

- Insufficient docs?

- Probably garbage

- Document basic storage troubleshooting for our support team

- What logs are useful when, what log level to use

- This has been discussed during the GSS weekly team meeting; however, it would be beneficial to have this documented.

- Common vSphere errors, their debugging and fixing.

- Document sig-storage flake handling - not all failed [sig-storage] tests are ours

Epic Goal

- Update all images that we ship with OpenShift to the latest upstream releases and libraries.

- Exact content of what needs to be updated will be determined as new images are released upstream, which is not known at the beginning of OCP development work. We don't know what new features will be included and should be tested and documented. Especially new CSI drivers releases may bring new, currently unknown features. We expect that the amount of work will be roughly the same as in the previous releases. Of course, QE or docs can reject an update if it's too close to deadline and/or looks too big.

Traditionally we did these updates as bugfixes, because we did them after the feature freeze (FF). Trying no-feature-freeze in 4.12. We will try to do as much as we can before FF, but we're quite sure something will slip past FF as usual.

Why is this important?

- We want to ship the latest software that contains new features and bugfixes.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

There is a new driver release 5.0.0 since the last rebase that includes snapshot support:

https://github.com/kubernetes-sigs/ibm-vpc-block-csi-driver/releases/tag/v5.0.0

Rebase the driver on v5.0.0 and update the deployments in ibm-vpc-block-csi-driver-operator.

There are no corresponding changes in ibm-vpc-node-label-updater since the last rebase.

Background and Goal

Currently in OpenShift we do not support distributing hotfix packages to cluster nodes. In time-sensitive situations, a RHEL hotfix package can be the quickest route to resolving an issue.

Acceptance Criteria

- Under guidance from Red Hat CEE, customers can deploy RHEL hotfix packages to MachineConfigPools.

- Customers can easily remove the hotfix when the underlying RHCOS image incorporates the fix.

Before we ship OCP CoreOS layering in https://issues.redhat.com/browse/MCO-165 we need to switch the format of what is currently `machine-os-content` to be the new base image.

The overall plan is:

- Publish the new base image as `rhel-coreos-8` in the release image

- Also publish the new extensions container (https://github.com/openshift/os/pull/763) as `rhel-coreos-8-extensions`

- Teach the MCO to use this without also involving layering/build controller

- Delete old `machine-os-content`

We need something in our repo /docs that we can point people to that briefly explains how to use "layering features" via the MCO in OCP (with the understanding that OKD also uses the MCO).

Maybe this ends up in its own repo like https://github.com/coreos/coreos-layering-examples eventually, maybe it doesn't.

I'm thinking something like https://github.com/openshift/machine-config-operator/blob/layering/docs/DemoLayering.md back from when we did the layering branch, but actually matching what we have in our main branch.

This is separate but probably related to what Colin started in the Docs Tracker.

Feature Overview

- Follow-up work for the new provider, Nutanix, to extend existing capabilities with new ones

Goals

- Make the Nutanix CSI Driver part of the CVO once the driver and the Operator have been open sourced by the vendor

- Enable IPI for disconnected environments

- Enable the UPI workflow

- Nutanix CCM for the Node Controller

- Enable Egress IP for the provider

Requirements

- This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non-MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |
|---|---|---|
| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |
| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

Epic Goal

- Allow users to choose Nutanix platform integration (similar to vSphere) from AI SaaS

Why is this important?

- Expand the RH offering beyond IPI

Scenarios

- ...

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Dependencies (internal and external)

- ...

Previous Work (Optional):

- …

Open questions:

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Description of the problem:

In BE 2.13.0, in the Nutanix UMN flow, bootstrap validation fails if machine_network = [].

How reproducible:

Trying to reproduce

Steps to reproduce:

1.

2.

3.

Actual results:

Expected results:

Why?

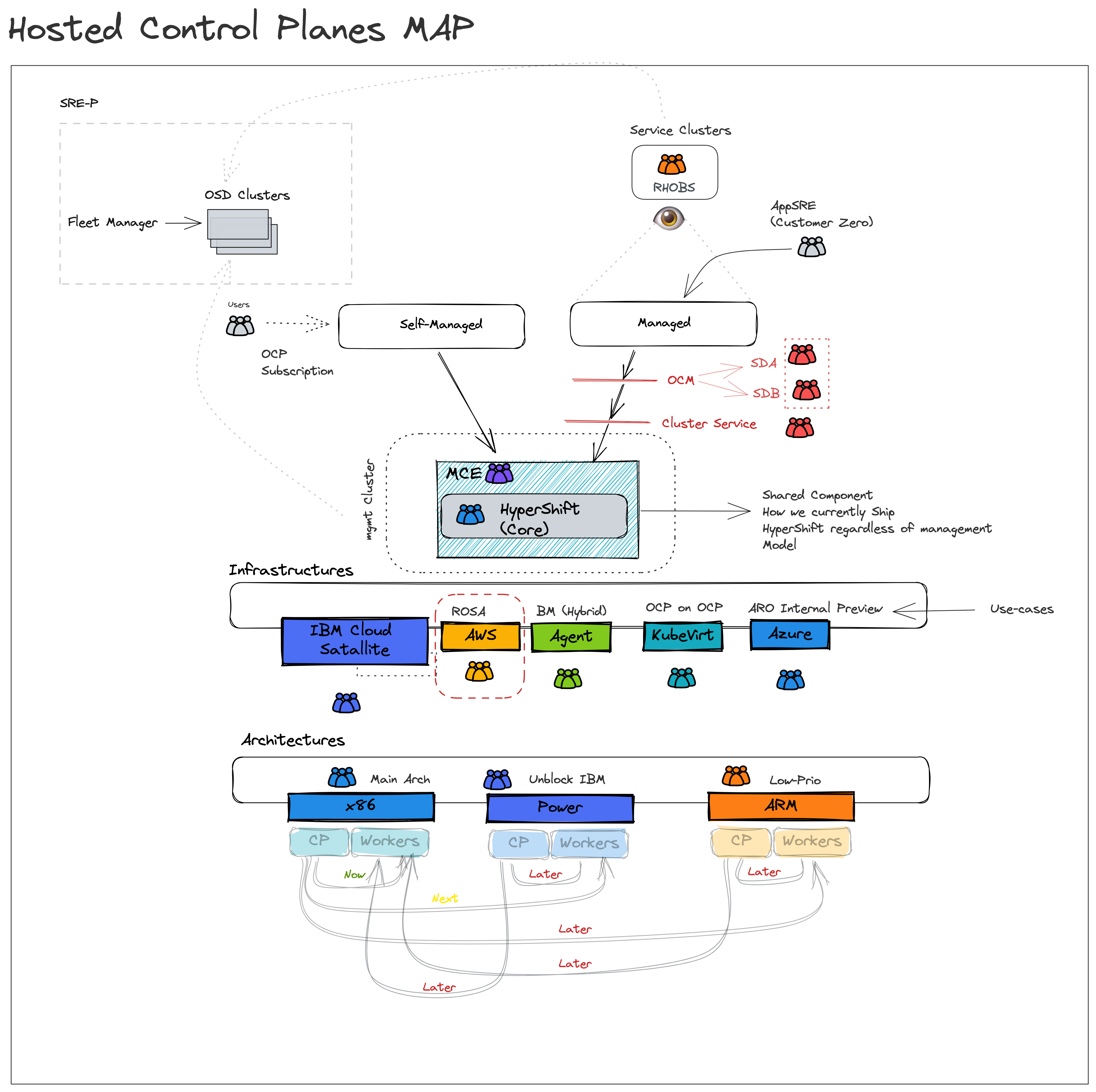

- Decouple control and data plane.

- Customers do not pay Red Hat more to run HyperShift control planes and supporting infrastructure than Standalone control planes and supporting infrastructure.

- Improve security

- Shift credentials out of cluster that support the operation of core platform vs workload

- Improve cost

- Allow a user to toggle what they don’t need.

- Ensure a smooth path to scale to 0 workers and upgrade with 0 workers.

Assumption

- A customer will be able to associate a cluster as “Infrastructure only”

- E.g. one option: management cluster has role=master, and role=infra nodes only, control planes are packed on role=infra nodes

- OR the entire cluster is labeled infrastructure, and node roles are ignored.

- Anything that runs on a master node by default in Standalone that is present in HyperShift MUST be hosted and not run on a customer worker node.

Doc: https://docs.google.com/document/d/1sXCaRt3PE0iFmq7ei0Yb1svqzY9bygR5IprjgioRkjc/edit

Epic Goal

- To improve debug-ability of ovn-k in hypershift

- To verify the stability of ovn-k in hypershift

- To introduce an EgressIP reachability check that will work in hypershift

Why is this important?

- ovn-k is supposed to be GA in 4.12. We need to make sure that it is stable, that we know its limitations, and that we can debug it much as we would on a self-hosted cluster.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

Dependencies (internal and external)

- This will need consultation with the people working on HyperShift

Previous Work (Optional):

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

CNCC was moved to the management cluster and it should use proxy settings defined for the management cluster.

In testing dual stack on vsphere we discovered that kubelet will not allow us to specify two ips on any platform except baremetal. We have a couple of options to deal with that:

- Wait for https://github.com/kubernetes/enhancements/pull/3706 to merge and be implemented upstream. This almost certainly means we miss 4.13.

- Wait for https://github.com/kubernetes/enhancements/pull/3706 to merge and then implement the design downstream. This involves risk of divergence from the eventual upstream design. We would probably only ship this way as tech preview and provide support exceptions for major customers.

- Remove the setting of nodeip for kubelet. This should get around the limitation on providing dual IPs, but it means we're reliant on the default kubelet IP selection logic, which is...not good. We'd probably only be able to support this on single nic network configurations.

GA CgroupV2 in 4.13

Default with RHEL 9

- Day 0 support for 4.13 where the customer is able to change v1 (default) to v2

- Day 1 where the customer is able to change v1 (default) to v2

- documentation on migration

- Pinning existing clusters to V1 before upgrade to 4.13

Starting with OCP 4.13, RHCOS nodes come up with the "CGroupsV2" configuration by default.

Command to verify on any OCP cluster node

stat -c %T -f /sys/fs/cgroup/

So, to avoid unexpected complications, if the `cgroupMode` is found to be empty in the `nodes.config` resource, `CGroupsv1` configuration needs to be explicitly set using the `machine-config-operator`
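The two checks above can be sketched in Python. This is a minimal illustration, not the MCO's code: the function names and the `"v1"` / `"v2"` strings are assumptions made for the example. The mapping from the `stat` output to a cgroup version follows common Linux behaviour, where a cgroup v2 mount reports `cgroup2fs` and a v1 hierarchy reports `tmpfs`.

```python
def cgroup_version_from_fstype(fstype: str) -> str:
    """Interpret the output of `stat -c %T -f /sys/fs/cgroup/`."""
    fstype = fstype.strip()
    if fstype == "cgroup2fs":
        return "v2"
    if fstype == "tmpfs":
        return "v1"
    raise ValueError(f"unexpected filesystem type: {fstype!r}")


def pinned_cgroup_mode(current_mode: str) -> str:
    """Pin v1 explicitly when cgroupMode is empty; keep any explicit choice.

    `current_mode` stands for the cgroupMode value read from `nodes.config`
    (hypothetical input; how it is read is out of scope here).
    """
    return current_mode if current_mode else "v1"
```

An empty `cgroupMode` is therefore turned into an explicit v1 setting, while a cluster that already chose a mode is left alone.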

This user story tracks the changes required to remove the TechPreview related checks in the MCO code to graduate the CGroupsV2 feature to GA.

Feature

As an Infrastructure Administrator, I want to deploy OpenShift on vSphere with supervisor (aka Masters) and worker nodes (from a MachineSet) across multiple vSphere data centers and multiple vSphere clusters using full stack automation (IPI) and user provided infrastructure (UPI).

MVP

Install OpenShift on vSphere using IPI / UPI in multiple vSphere data centers (regions) and multiple vSphere clusters in 1 vCenter, all in the same IPv4 subnet (in the same physical location).

- Kubernetes Region contains vSphere datacenter and (single) vCenter name

- Kubernetes Zone contains vSphere cluster, resource pool, datastore, network (port group)

Out of scope

- There is no support for the conversion of a non-zonal configuration (i.e. an existing OpenShift installation without 1+ zones) to a zonal configuration (1+ zones), but zonal UPI installation by the Infrastructure Administrator is permitted.

Scenarios for consideration:

- OpenShift in vSphere across different zones to avoid single points of failure, whereby each node is in different ESX clusters within the same vSphere datacenter, but in different networks.

- OpenShift in vSphere across multiple vSphere datacenters, while ensuring workers and masters are spread across 2 different datacenters in different subnets (RFE-845, RFE-459).

Acceptance criteria:

- Ensure vSphere IPI can successfully be deployed with ODF across the 3 zones (vSphere clusters) within the same vCenter [like we do with AWS, GCP & Azure].

- Ensure zonal configuration in vSphere using UPI is documented and tested.

References:

Epic Goal*

We need SPLAT-594 to be reflected in our CSI driver operator to support vSphere topology of storage GA.

Why is this important? (mandatory)

See SPLAT-320.

Scenarios (mandatory)

As a user, I want to edit the Infrastructure object after OCP installation (or upgrade) to update the cluster topology, so that all newly provisioned PVs get the new topology labels.

(With vSphere topology GA, we expect that users will be allowed to edit Infrastructure and change the cluster topology after cluster installation.)

Dependencies (internal and external) (mandatory)

- SPLAT: [vsphere] Support Multiple datacenters and clusters GA.

Our expectation is that teams would modify the list below to fit the epic. Some epics may not need all the default groups but what is included here should accurately reflect who will be involved in delivering the epic.

- Development -

- Documentation -

- QE -

- PX -

- Others -

Acceptance Criteria (optional)

Provide some (testable) examples of how we will know if we have achieved the epic goal.

Drawbacks or Risk (optional)

It's possible that Infrastructure will remain read-only. No code on Storage side is expected then.

Done - Checklist (mandatory)

- CI Testing - Basic e2e automation tests are merged and completing successfully

- Documentation - Content development is complete.

- QE - Test scenarios are written and executed successfully.

- Technical Enablement - Slides are complete (if requested by PLM)

- Engineering Stories Merged

- All associated work items with the Epic are closed

- Epic status should be “Release Pending”

In zonal deployments, it is possible that new failure domains are added to the cluster.

In that case, we will most likely have to discover these new failure domains and tag the datastores in them, so that topology-aware provisioning can work.

When STOR-1145 is merged, make sure that these new metrics are reported via telemetry to us.

Exit criteria:

- verify that metrics are reported in telemetry? I am not sure we have capabilities to test that, all code will be in monitoring repos.

I was thinking we will probably need a detailed metric for topology information about the cluster, such as how many failure domains, how many datacenters and how many datastores there are.

We should create a metric and an alert if both ClusterCSIDriver and Infra object specify a topology.

Such a configuration is supported, and the Infra object takes precedence, but it indicates a user error, so the user should be alerted about it.
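A minimal sketch of that check in Python, under stated assumptions: the two arguments stand for whatever topology data the ClusterCSIDriver and the Infra object carry, and both function names are hypothetical. It shows only the rule from the text: alert when both are set, and let the Infra object win.

```python
from typing import Optional, Sequence


def topology_conflict(csi_driver_topology: Sequence[str],
                      infra_topology: Sequence[str]) -> bool:
    """True when both objects specify a topology, which should raise an alert."""
    return bool(csi_driver_topology) and bool(infra_topology)


def effective_topology_source(csi_driver_topology: Sequence[str],
                              infra_topology: Sequence[str]) -> Optional[str]:
    """Name the object whose topology is used; the Infra object takes precedence."""
    if infra_topology:
        return "Infrastructure"
    if csi_driver_topology:
        return "ClusterCSIDriver"
    return None
```

A metric could then simply export the boolean from `topology_conflict` as 0 or 1, with the alert firing on 1.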

As an OpenShift engineer, make changes to various OpenShift components so that vSphere zonal installation is considered GA.

As an OpenShift engineer, create an additional UPI terraform for zonal so that it can be tested in CI.

As an OpenShift engineer, I need to follow the process to move the API from tech preview to GA so it can be used by clusters not installed with TechPreviewNoUpgrade.

more to follow...

As an OpenShift engineer, implement a new job for UPI zonal so that this method of installation is tested.

As an OpenShift engineer, deprecate existing vSphere platform spec parameters so that they can eventually be removed in favor of zonal.

Feature Goal*

What is our purpose in implementing this? What new capability will be available to customers?

The goal of this feature is to provide a consistent, predictable and deterministic approach on how the default storage class(es) is managed.

Why is this important? (mandatory)

The current default storage class implementation has corner cases which can result in PVs staying in pending because there is either no default storage class OR multiple default storage classes are defined.

Scenarios (mandatory)

Provide details for user scenarios including actions to be performed, platform specifications, and user personas.

No default storage class

In some cases no default SC is defined. This can happen during OCP deployment, when components such as the registry request a PV before any SC has been defined. It can also happen while the default SC is being changed: there is no default between the moment the admin unsets the current one and the moment they set the new one.

- The admin marks the current default SC1 as non-default.

Another user creates PVC requesting a default SC, by leaving pvc.spec.storageClassName=nil. The default SC does not exist at this point, therefore the admission plugin leaves the PVC untouched with pvc.spec.storageClassName=nil.

The admin marks SC2 as default.

PV controller, when reconciling the PVC, updates pvc.spec.storageClassName=nil to the new SC2.

PV controller uses the new SC2 when binding / provisioning the PVC.

- The installer creates PVC for the image registry first, requesting the default storage class by leaving pvc.spec.storageClassName=nil.

The installer creates a default SC.

PV controller, when reconciling the PVC, updates pvc.spec.storageClassName=nil to the new default SC.

PV controller uses the new default SC when binding / provisioning the PVC.
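Both sequences above follow the same pattern, which can be modelled in a few lines of Python. This is a toy model with hypothetical names (`PVC`, `admit`, `reconcile`), not Kubernetes code: `None` stands for `pvc.spec.storageClassName=nil`, `admit` plays the admission plugin, and `reconcile` plays the PV controller.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class PVC:
    name: str
    storage_class_name: Optional[str] = None  # None models storageClassName=nil


def admit(pvc: PVC, default_sc: Optional[str]) -> PVC:
    """Admission: fill in the default SC if one exists, else leave the PVC untouched."""
    if pvc.storage_class_name is None and default_sc is not None:
        pvc.storage_class_name = default_sc
    return pvc


def reconcile(pvcs: Iterable[PVC], default_sc: Optional[str]) -> None:
    """PV controller: retroactively assign the default SC to PVCs that still have none."""
    if default_sc is None:
        return
    for pvc in pvcs:
        if pvc.storage_class_name is None:
            pvc.storage_class_name = default_sc


# The registry PVC is created before any default SC exists...
registry_pvc = admit(PVC("image-registry"), default_sc=None)
# ...and picks up the default once it is created and the controller reconciles.
reconcile([registry_pvc], default_sc="sc2")
```

A PVC that names a storage class explicitly is never modified by either step.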

Multiple Storage Classes

In some cases there are multiple default SC, this can be an admin mistake (forget to unset the old one) or during the period where a new default SC is created but the old one is still present.

New behavior:

- Create a default storage class A

- Create a default storage class B

- Create PVC with pvc.spec.storageClassName = nil

-> the PVC will get the default storage class with the newest CreationTimestamp (i.e. B) and no error should show.

-> admin will get an alert that there are multiple default storage classes and they should do something about it.
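The selection rule above can be sketched as follows. This is an illustration in Python with hypothetical names (`StorageClass`, `pick_default`); it models only what the text states: the default with the newest creation timestamp wins, and having more than one default should trigger an alert.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Iterable, Optional, Tuple


@dataclass(frozen=True)
class StorageClass:
    name: str
    creation_timestamp: datetime
    is_default: bool


def pick_default(classes: Iterable[StorageClass]) -> Tuple[Optional[StorageClass], bool]:
    """Return (chosen default, should_alert).

    The chosen default is the default SC with the newest creation timestamp;
    should_alert is True when more than one default SC exists.
    """
    defaults = [sc for sc in classes if sc.is_default]
    if not defaults:
        return None, False
    newest = max(defaults, key=lambda sc: sc.creation_timestamp)
    return newest, len(defaults) > 1


a = StorageClass("a", datetime(2023, 1, 1, tzinfo=timezone.utc), True)
b = StorageClass("b", datetime(2023, 2, 1, tzinfo=timezone.utc), True)
chosen, should_alert = pick_default([a, b])
```

Here `chosen` is storage class B and `should_alert` is true, matching the behavior described for the PVC and the admin alert.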

CSI that are shipped as part of OCP

The CSI drivers we ship as part of OCP are deployed and managed by RH operators. These operators automatically create a default storage class. Some customers don't like this approach and prefer to:

- Create their own default storage class

- Have no default storage class in order to disable dynamic provisioning

Dependencies (internal and external) (mandatory)

What items must be delivered by other teams/groups to enable delivery of this epic.

No external dependencies.

Contributing Teams(and contacts) (mandatory)

Our expectation is that teams would modify the list below to fit the epic. Some epics may not need all the default groups but what is included here should accurately reflect who will be involved in delivering the epic.

- Development - STOR

- Documentation - STOR

- QE - STOR

- PX -

- Others -

Acceptance Criteria (optional)

Provide some (testable) examples of how we will know if we have achieved the epic goal.

Drawbacks or Risk (optional)

This can confuse customers, because it changes the default behavior they are used to. It needs to be carefully documented.

Done - Checklist (mandatory)

The following points apply to all epics and are what the OpenShift team believes are the minimum set of criteria that epics should meet for us to consider them potentially shippable. We request that epic owners modify this list to reflect the work to be completed in order to produce something that is potentially shippable.

- CI Testing - Basic e2e automation tests are merged and completing successfully

- Documentation - Content development is complete.

- QE - Test scenarios are written and executed successfully.

- Technical Enablement - Slides are complete (if requested by PLM)

- Engineering Stories Merged

- All associated work items with the Epic are closed

- Epic status should be “Release Pending”

- https://github.com/openshift/alibaba-disk-csi-driver-operator/pull/41

- https://github.com/openshift/aws-ebs-csi-driver-operator/pull/173

- https://github.com/openshift/azure-disk-csi-driver-operator/pull/63

- https://github.com/openshift/azure-file-csi-driver-operator/pull/42

- https://github.com/openshift/gcp-pd-csi-driver-operator/pull/58

- https://github.com/openshift/ibm-vpc-block-csi-driver-operator/pull/48

- https://github.com/openshift/openstack-cinder-csi-driver-operator/pull/103

- https://github.com/openshift/ovirt-csi-driver-operator/pull/111

- https://github.com/openshift/vmware-vsphere-csi-driver-operator/pull/126

oc-mirror is a GA product as of OpenShift 4.11.

The goal of this feature is to address future customer requests for new features or capabilities in oc-mirror.

In 4.12 release, a new feature was introduced to oc-mirror allowing it to use OCI FBC catalogs as starting point for mirroring operators.

Overview

As an oc-mirror user, I would like the OCI FBC feature to be stable so that I can use it in a production-ready environment, and so that the new feature works seamlessly with all existing features of oc-mirror.

Current Status

This feature is ring-fenced in the oc-mirror repository. It uses the following flags so as not to cause any breaking changes in the current oc-mirror functionality.

- --use-oci-feature

- --oci-feature-action (copy or mirror)

- --oci-registries-config

The OCI FBC (file-based catalog) format has been delivered for Tech Preview in 4.12.

Tech Enablement slides can be found here https://docs.google.com/presentation/d/1jossypQureBHGUyD-dezHM4JQoTWPYwiVCM3NlANxn0/edit#slide=id.g175a240206d_0_7

Design doc is in https://docs.google.com/document/d/1-TESqErOjxxWVPCbhQUfnT3XezG2898fEREuhGena5Q/edit#heading=h.r57m6kfc2cwt (also contains latest design discussions around the stories of this epic)

Link to previous working epic https://issues.redhat.com/browse/CFE-538

Contacts for the OCI FBC feature

- Sherine Khoury

- Luigi Mario Zuccarelli

- IBM John Hunkins

- CFE PM Heather Heffner

- WRKLDS PM Tomas Smetana

As an IBM user, I'd like to be able to specify the destination of the OCI FBC catalog in ImageSetConfig

So that I can control where that image is pushed to on the disconnected destination registry, because the path on disk to that OCI catalog doesn't make sense to be used in the component paths of the destination catalog.

Expected Inputs and Outputs - Counter Proposal

Examples provided assume that the current working directory is set to /tmp/cwdtest.

Instead of introducing a targetNamespace which is used in combination with targetName, this counter proposal introduces a targetCatalog field which supersedes the existing targetName field (which would be marked as deprecated). Users should transition from using targetName to targetCatalog, but if both happen to be specified, the targetCatalog is preferred and targetName is ignored. Any ISC that currently uses targetName alone should continue to be used as currently defined.

The rationale for targetCatalog is that some customers will have restrictions on where images can be placed. All IBM images always use a namespace. We therefore need a way to indicate where the CATALOG image is located within the context of the target registry... it can't just be placed in the root, so we need a way to configure this.

The targetCatalog field consists of an optional namespace followed by the target image name, described in extended Backus–Naur form below:

    target-catalog = [namespace '/'] target-name
    target-name    = path-component
    namespace      = path-component ['/' path-component]*
    path-component = alpha-numeric [separator alpha-numeric]*
    alpha-numeric  = /[a-z0-9]+/
    separator      = /[_.]|__|[-]*/

The target-name portion of targetCatalog represents the image name in the final destination registry, and matches the definition/purpose of the targetName field. The namespace is only used for "placement" of the catalog image into the right "hierarchy" in the target registry. The target-name portion will be used in the catalog source metadata name, the file name of the catalog source, and target image reference.
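The grammar above can be checked with a short Python sketch. This is a hypothetical helper, not oc-mirror code; `split_target_catalog` is a name chosen for the example. The separator `[-]*` is written as `-+` here: an empty separator only merges two alphanumeric runs, so the same strings are accepted while the pattern stays unambiguous.

```python
import re
from typing import Tuple

# path-component = alpha-numeric [separator alpha-numeric]*
PATH_COMPONENT = re.compile(r"[a-z0-9]+(?:(?:__|[_.]|-+)[a-z0-9]+)*")


def split_target_catalog(value: str) -> Tuple[str, str]:
    """Split a targetCatalog value into (namespace, target-name).

    The namespace is "" when the value has no '/' in it.
    Raises ValueError if any path component violates the grammar.
    """
    parts = value.split("/")
    for part in parts:
        if not PATH_COMPONENT.fullmatch(part):
            raise ValueError(f"invalid path component {part!r} in {value!r}")
    return "/".join(parts[:-1]), parts[-1]
```

Splitting on `/` first keeps the rule "everything before the last slash is the namespace" explicit, and each component is then validated on its own.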

Examples:

- with namespace:

targetCatalog: foo/bar/baz/ibm-zcon-zosconnect-example

- without namespace:

targetCatalog: ibm-zcon-zosconnect-example

Simple Flow

FBC image from docker registry

Command:

oc mirror -c /Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml --dest-skip-tls --dest-use-http docker://localhost:5000

ISC

/Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml

    kind: ImageSetConfiguration
    apiVersion: mirror.openshift.io/v1alpha2
    storageConfig:
      local:
        path: /tmp/localstorage
    mirror:
      operators:
      - catalog: icr.io/cpopen/ibm-zcon-zosconnect-catalog@sha256:6f02ecef46020bcd21bdd24a01f435023d5fc3943972ef0d9769d5276e178e76

ICSP

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/imageContentSourcePolicy.yaml

    apiVersion: operator.openshift.io/v1alpha1
    kind: ImageContentSourcePolicy
    metadata:
      labels:
        operators.openshift.org/catalog: "true"
      name: operator-0
    spec:
      repositoryDigestMirrors:
      - mirrors:
        - localhost:5000/cpopen
        source: icr.io/cpopen
      - mirrors:
        - localhost:5000/openshift4
        source: registry.redhat.io/openshift4

CatalogSource

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/catalogSource-ibm-zcon-zosconnect-catalog.yaml

    apiVersion: operators.coreos.com/v1alpha1
    kind: CatalogSource
    metadata:
      name: ibm-zcon-zosconnect-catalog
      namespace: openshift-marketplace
    spec:
      image: localhost:5000/cpopen/ibm-zcon-zosconnect-catalog:6f02ec
      sourceType: grpc

Simple Flow With Target Namespace

FBC image from docker registry (putting images into a destination "namespace")

Command:

oc mirror -c /Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml --dest-skip-tls --dest-use-http docker://localhost:5000/foo

ISC

/Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml

    kind: ImageSetConfiguration
    apiVersion: mirror.openshift.io/v1alpha2
    storageConfig:
      local:
        path: /tmp/localstorage
    mirror:
      operators:
      - catalog: icr.io/cpopen/ibm-zcon-zosconnect-catalog@sha256:6f02ecef46020bcd21bdd24a01f435023d5fc3943972ef0d9769d5276e178e76

ICSP

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/imageContentSourcePolicy.yaml

    apiVersion: operator.openshift.io/v1alpha1
    kind: ImageContentSourcePolicy
    metadata:
      labels:
        operators.openshift.org/catalog: "true"
      name: operator-0
    spec:
      repositoryDigestMirrors:
      - mirrors:
        - localhost:5000/foo/cpopen
        source: icr.io/cpopen
      - mirrors:
        - localhost:5000/foo/openshift4
        source: registry.redhat.io/openshift4

CatalogSource

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/catalogSource-ibm-zcon-zosconnect-catalog.yaml

    apiVersion: operators.coreos.com/v1alpha1
    kind: CatalogSource
    metadata:
      name: ibm-zcon-zosconnect-catalog
      namespace: openshift-marketplace
    spec:
      image: localhost:5000/foo/cpopen/ibm-zcon-zosconnect-catalog:6f02ec
      sourceType: grpc

Simple Flow With TargetCatalog / TargetTag

FBC image from docker registry (overriding the catalog name and tag)

Command:

oc mirror -c /Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml --dest-skip-tls --dest-use-http docker://localhost:5000

ISC

/Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml

kind: ImageSetConfiguration
apiVersion: mirror.openshift.io/v1alpha2
storageConfig:
  local:
    path: /tmp/localstorage
mirror:
  operators:
  - catalog: icr.io/cpopen/ibm-zcon-zosconnect-catalog@sha256:6f02ecef46020bcd21bdd24a01f435023d5fc3943972ef0d9769d5276e178e76
    targetCatalog: cpopen/ibm-zcon-zosconnect-example # NOTE: namespace now has to be provided along with the
                                                       # target catalog name to preserve the namespace in the resulting image
    targetTag: v123

ICSP

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/imageContentSourcePolicy.yaml

apiVersion: operator.openshift.io/v1alpha1
kind: ImageContentSourcePolicy
metadata:
  labels:
    operators.openshift.org/catalog: "true"
  name: operator-0
spec:
  repositoryDigestMirrors:
  - mirrors:
    - localhost:5000/cpopen
    source: icr.io/cpopen
  - mirrors:
    - localhost:5000/openshift4
    source: registry.redhat.io/openshift4

CatalogSource

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/catalogSource-ibm-zcon-zosconnect-example.yaml

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  name: ibm-zcon-zosconnect-example
  namespace: openshift-marketplace
spec:
  image: localhost:5000/cpopen/ibm-zcon-zosconnect-example:v123
  sourceType: grpc

OCI Flow

FBC image from OCI path

In this example we're suggesting the use of a targetCatalog field.

Command:

oc mirror -c /Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml --dest-skip-tls --dest-use-http --use-oci-feature docker://localhost:5000

ISC

/Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml

kind: ImageSetConfiguration
apiVersion: mirror.openshift.io/v1alpha2
storageConfig:
  local:
    path: /tmp/localstorage
mirror:
  operators:
  - catalog: oci:///foo/bar/baz/ibm-zcon-zosconnect-catalog/amd64 # This is just a path to the catalog and has no special meaning
    targetCatalog: foo/bar/baz/ibm-zcon-zosconnect-example # <--- REQUIRED when using OCI and optional for docker images
                                                           # value is used within the context of the target registry
    # targetTag: v123 # <--- OPTIONAL

ICSP

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/imageContentSourcePolicy.yaml

apiVersion: operator.openshift.io/v1alpha1
kind: ImageContentSourcePolicy
metadata:
  labels:
    operators.openshift.org/catalog: "true"
  name: operator-0
spec:
  repositoryDigestMirrors:
  - mirrors:
    - localhost:5000/cpopen
    source: icr.io/cpopen
  - mirrors:
    - localhost:5000/openshift4
    source: registry.redhat.io/openshift4

CatalogSource

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/catalogSource-ibm-zcon-zosconnect-example.yaml

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  name: ibm-zcon-zosconnect-example
  namespace: openshift-marketplace
spec:
  image: localhost:5000/foo/bar/baz/ibm-zcon-zosconnect-example:6f02ec # Example uses "targetCatalog" set to
                                                                       # "foo/bar/baz/ibm-zcon-zosconnect-example" at the
                                                                       # destination registry localhost:5000
  sourceType: grpc

OCI Flow With Namespace

FBC image from OCI path (putting images into a destination "namespace" named "abc")

Command:

oc mirror -c /Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml --dest-skip-tls --dest-use-http --use-oci-feature docker://localhost:5000/abc

ISC

/Users/jhunkins/go/src/github.com/jchunkins/oc-mirror/ImageSetConfiguration.yml

kind: ImageSetConfiguration
apiVersion: mirror.openshift.io/v1alpha2
storageConfig:
  local:
    path: /tmp/localstorage
mirror:
  operators:
  - catalog: oci:///foo/bar/baz/ibm-zcon-zosconnect-catalog/amd64 # This is just a path to the catalog and has no special meaning
    targetCatalog: foo/bar/baz/ibm-zcon-zosconnect-example # <--- REQUIRED when using OCI and optional for docker images
                                                           # value is used within the context of the target registry
    # targetTag: v123 # <--- OPTIONAL

ICSP

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/imageContentSourcePolicy.yaml

apiVersion: operator.openshift.io/v1alpha1
kind: ImageContentSourcePolicy
metadata:
  labels:
    operators.openshift.org/catalog: "true"
  name: operator-0
spec:
  repositoryDigestMirrors:
  - mirrors:
    - localhost:5000/abc/cpopen
    source: icr.io/cpopen
  - mirrors:
    - localhost:5000/abc/openshift4
    source: registry.redhat.io/openshift4

CatalogSource

/tmp/cwdtest/oc-mirror-workspace/results-1675716807/catalogSource-ibm-zcon-zosconnect-example-catalog.yaml

apiVersion: operators.coreos.com/v1alpha1
kind: CatalogSource
metadata:
  name: ibm-zcon-zosconnect-example
  namespace: openshift-marketplace
spec:
  image: localhost:5000/abc/foo/bar/baz/ibm-zcon-zosconnect-example:6f02ec # Example uses "targetCatalog" set to
                                                                           # "foo/bar/baz/ibm-zcon-zosconnect-example" at the
                                                                           # destination registry localhost:5000/abc
  sourceType: grpc
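The naming pattern visible across the flows above can be summarized in a short sketch. This is an illustration inferred only from the example outputs, not oc-mirror's actual implementation: the function name `mirrored_catalog_ref` and the `oci_digest` parameter are hypothetical, and the rule that the default tag is the first six hex characters of the catalog digest is an assumption drawn from the `6f02ec` tags shown in the examples.

```python
def mirrored_catalog_ref(destination, catalog, target_catalog=None,
                         target_tag=None, oci_digest=None):
    """Compose the CatalogSource image reference seen in the examples.

    Hypothetical helper: it only handles digest-pinned registry catalogs
    and OCI catalogs whose digest is supplied by the caller.
    """
    # "docker://localhost:5000/foo" -> "localhost:5000/foo"
    dest = destination.split("://", 1)[-1].rstrip("/")

    if catalog.startswith("oci://"):
        # The OCI path carries no registry namespace, so targetCatalog is required.
        if not target_catalog:
            raise ValueError("targetCatalog is required for OCI catalogs")
        repo, digest = target_catalog, oci_digest
    else:
        name, _, digest = catalog.partition("@")
        # Drop the source registry host, keep namespace and image name.
        source_repo = name.split("/", 1)[1]
        repo = target_catalog or source_repo

    if target_tag:
        tag = target_tag
    elif digest:
        # Assumption: the default tag is a short prefix of the digest.
        tag = digest.split(":", 1)[-1][:6]
    else:
        raise ValueError("need either targetTag or a catalog digest")

    return f"{dest}/{repo}:{tag}"


DIGEST = "sha256:6f02ecef46020bcd21bdd24a01f435023d5fc3943972ef0d9769d5276e178e76"
print(mirrored_catalog_ref(
    "docker://localhost:5000/foo",
    "icr.io/cpopen/ibm-zcon-zosconnect-catalog@" + DIGEST,
))
```

The destination "namespace" is simply prepended to the repository path, which is why the same ImageSetConfiguration yields different image references for `docker://localhost:5000` and `docker://localhost:5000/foo`.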

WHAT

Refer to the engineering notes document https://docs.google.com/document/d/1zZ6FVtgmruAeBoUwt4t_FoZH2KEm46fPitUB23ifboY/edit#heading=h.6pw5r5w2r82 (steps 2-7).

Acceptance Criteria

- Code cleanup and formatting into functions

- Ensure good commenting

- Implement correct code functionality

- Ensure the OCI mirrorToMirror functionality works correctly

- Update unit tests

As IBM, I would like to use oc-mirror with the --use-oci-feature flag and ImageSetConfigs containing OCI-FBC operator catalogs to mirror these catalogs to a connected registry

so that, regarding OCI FBC catalogs:

- all bundles specified in the ImageSetConfig and their related images are mirrored from their source registry to the destination registry

- and the catalogs are mirrored from the local disk to the destination registry

- and the ImageContentSourcePolicy and CatalogSource files are generated correctly

and that regarding releases, additional images, helm charts:

- The images that are selected for mirroring are mirrored to the destination registry using the MirrorToMirror workflow

As an oc-mirror user I want a well documented and intuitive process

so that I can effectively and efficiently deliver image artifacts in both connected and disconnected installs with no impact on my current workflow

Glossary:

- OCI-FBC operator catalog: catalog image in oci format saved to disk, referenced with oci://path-to-image

- registry based operator catalog: catalog image hosted on a container registry.

References:

Acceptance criteria:

- No regression on oc-mirror use cases that are not using OCI-FBC feature

- mirrorToMirror use case with oci feature flag should be successful when all operator catalogs in ImageSetConfig are OCI-FBC:

- oc-mirror -c config.yaml docker://remote-registry --use-oci-feature succeeds

- All release images, helm charts, additional images are mirrored to the remote-registry in an incremental manner (only new images are mirrored based on contents of the storageConfig)

- All OCI-FBC catalogs, selected bundles and their related images are mirrored to the remote-registry, and the corresponding CatalogSource and ImageContentSourcePolicy are generated

- All registry based catalogs, selected bundles and their related images are mirrored to the remote-registry, and the corresponding CatalogSource and ImageContentSourcePolicy are generated

- mirrorToDisk use case with the oci feature flag is forbidden. The following command should fail:

- oc-mirror --config=isc.yaml file://file-dir --use-oci-feature

- diskToMirror use case with the oci feature flag is forbidden. The following command should fail:

- oc-mirror --from=seq_xx_tar docker://remote-registry --use-oci-feature
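The flag rules in these acceptance criteria can be sketched as a small validator. This is an illustrative sketch, not oc-mirror's code: the function name `check_oci_flag` and the shape labels are hypothetical. It accepts `--use-oci-feature` only when a config is mirrored straight to a `docker://` registry, and rejects it for invocations that read from an archive (`--from`) or write to a `file://` destination.

```python
def check_oci_flag(config=None, from_archive=None, destination="",
                   use_oci_feature=False):
    """Classify an invocation by its shape and enforce the OCI flag rule."""
    to_registry = destination.startswith("docker://")
    to_disk = destination.startswith("file://")

    if config and to_registry:
        shape = "config-to-registry"    # the only shape allowed with the flag
    elif from_archive and to_registry:
        shape = "archive-to-registry"
    elif config and to_disk:
        shape = "config-to-disk"
    else:
        raise ValueError("unrecognized invocation")

    if use_oci_feature and shape != "config-to-registry":
        raise ValueError(f"--use-oci-feature is not allowed for {shape}")
    return shape


print(check_oci_flag(config="config.yaml",
                     destination="docker://remote-registry",
                     use_oci_feature=True))
```

Without the flag, all three shapes pass through unchanged, which matches the "no regression" criterion for use cases that do not use the OCI-FBC feature.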

Feature Overview

- Support OpenShift to be deployed on AWS Local Zones

Goals

- Support OpenShift to be deployed from day-0 on AWS Local Zones

- Support an existing OpenShift cluster to deploy compute Nodes on AWS Local Zones (day-2)

AWS Local Zones support - feature delivered in phases:

- Phase 0 (OCPPLAN-9630): Document how to create compute nodes on AWS Local Zones in day-0 (SPLAT-635)

- Phase 1 (OCPBU-2): Create edge compute pool to generate MachineSets for nodes with NoSchedule taints when installing a cluster in an existing VPC with AWS Local Zone subnets (SPLAT-636)

- Phase 2 (OCPBU-351): Installer automates network resources creation on Local Zone based on the edge compute pool (SPLAT-657)

Requirements

- This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non-MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |

|---|---|---|

| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |

| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

Epic Goal

- Admins can create a compute pool named `edge` on the AWS platform to set up Local Zone MachineSets.

- Admins can select and configure subnets on Local Zones before cluster creation.

- Ensure the installer allows creating a new machine pool for `edge` workloads

- Ensure the installer can create the MachineSet with `NoSchedule` taints on edge machine pools.

- Ensure Local Zone subnets will not be used on `worker` compute pools or control planes.

- Ensure the Wavelength zone will not be used in any compute pool

- Ensure the Cluster Network MTU manifest is created when Local Zone subnets are added while installing a cluster in an existing VPC
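The subnet placement rules in this list can be sketched as follows. This is a minimal illustration, not the installer's code: the function name `assign_subnets` is hypothetical, the zone type strings are the values AWS reports for a zone's type, the taint key is an assumption, and treating Wavelength subnets as silently unused (rather than as an error) is also an assumption.

```python
# Taint applied to edge MachineSets; the key is an assumption, the effect
# comes from the epic goal above.
EDGE_TAINT = {"key": "node-role.kubernetes.io/edge", "effect": "NoSchedule"}


def assign_subnets(subnets):
    """Split subnets into compute pools by the type of zone they live in.

    `subnets` maps a subnet ID to its zone type.
    """
    pools = {"worker": [], "edge": [], "unused": []}
    for subnet_id, zone_type in subnets.items():
        if zone_type == "availability-zone":
            # Regular zones back the control plane and `worker` pool.
            pools["worker"].append(subnet_id)
        elif zone_type == "local-zone":
            # Local Zone subnets are reserved for the `edge` pool.
            pools["edge"].append(subnet_id)
        elif zone_type == "wavelength-zone":
            # Wavelength zones are never placed in a compute pool.
            pools["unused"].append(subnet_id)
        else:
            raise ValueError(f"unknown zone type: {zone_type}")
    return pools


print(assign_subnets({
    "subnet-a": "availability-zone",
    "subnet-b": "local-zone",
    "subnet-c": "wavelength-zone",
}))
```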

Why is this important?

- …

Scenarios

User Stories

- As a cluster admin, I want the ability to specify a set of subnets on the AWS Local Zone locations to deploy worker nodes, so I can further create custom applications to deliver low latency to my end users.

- As a cluster admin, I would like to create a cluster extending worker nodes to the edge of the AWS cloud provider with Local Zones, so I can further create custom applications to deliver low latency to my end users.

- As a cluster admin, I would like to select existing subnets from the local and the parent region zones, to install a cluster, so I can manage networks with my automation.

- As a cluster admin, I would like to install OpenShift clusters, extending the compute nodes to the Local Zones in my day zero operations without needing to set up the network and compute dependencies, so I can speed up the edge adoption in my organization using OKD/OCP.

Acceptance Criteria

- The enhancement must be accepted and merged

- Release Technical Enablement - Provide necessary release enablement details and documents.

- The installer implementation must be merged

Dependencies (internal and external)

Previous Work (Optional):

Open questions::

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Feature Overview

- Support deploying OCP in “GCP Service Project” while networks are defined in “GCP Host Project”.

- Enable OpenShift IPI Installer to deploy OCP in “GCP Service Project” while networks are defined in “GCP Host Project”

- “GCP Service Project” is from where the OpenShift installer is fired.

- “GCP host project” is the target project where the deployment of the OCP machines is done.

- Customers use a shared VPC and have a distributed network spanning the projects.

Goals

- As a user, I want to be able to deploy OpenShift on Google Cloud using XPN, where networks and other resources are deployed in a shared "Host Project" while the user bootstraps the installation from a "Service Project", so that I can follow Google's architecture best practices

Requirements

- This Section: A list of specific needs or objectives that a Feature must deliver to satisfy the Feature. Some requirements will be flagged as MVP. If an MVP gets shifted, the feature shifts. If a non-MVP requirement slips, it does not shift the feature.

| Requirement | Notes | isMvp? |

|---|---|---|

| CI - MUST be running successfully with test automation | This is a requirement for ALL features. | YES |

| Release Technical Enablement | Provide necessary release enablement details and documents. | YES |

Documentation Considerations

Questions to be addressed:

- What educational or reference material (docs) is required to support this product feature? For users/admins? Other functions (security officers, etc)?

- Does this feature have doc impact?

- New Content, Updates to existing content, Release Note, or No Doc Impact

- If unsure and no Technical Writer is available, please contact Content Strategy.

- What concepts do customers need to understand to be successful in [action]?

- How do we expect customers will use the feature? For what purpose(s)?

- What reference material might a customer want/need to complete [action]?

- Is there source material that can be used as reference for the Technical Writer in writing the content? If yes, please link if available.

- What is the doc impact (New Content, Updates to existing content, or Release Note)?

Epic Goal

- Enable OpenShift IPI Installer to deploy OCP to a shared VPC in GCP.

- The host project is where the VPC and subnets are defined. Those networks are shared to one or more service projects.

- Objects created by the installer are created in the service project where possible. Firewall rules may be the only exception.

- Documentation outlines the needed minimal IAM for both the host and service project.

Why is this important?

- Shared VPCs are a feature of GCP to enable granular separation of duties for organizations that centrally manage networking but delegate other functions and separation of billing. This is used more often in larger organizations where separate teams manage subsets of the cloud infrastructure. Enterprises that use this model would also like to create IPI clusters so that they can leverage the features of IPI. Currently organizations that use Shared VPCs must use UPI and implement the features of IPI themselves. This is repetitive engineering of little value to the customer and an increased risk of drift from upstream IPI over time. As new features are built into IPI, organizations must become aware of those changes and implement them themselves instead of getting them "for free" during upgrades.

Scenarios

- Deploy cluster(s) into service project(s) on network(s) shared from a host project.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

User Story:

As a developer, I want to be able to:

- specify a project for the public and private DNS managedZones

so that I can achieve

- enable DNS zones in alternate projects, such as the GCP XPN Host Project

Acceptance Criteria:

Description of criteria:

- cluster-ingress-operator can parse the project and zone name from the following format

- projects/project-id/managedZones/zoneid

- cluster-ingress-operator continues to accept names that are not relative resource names

- zoneid
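The two accepted formats can be illustrated with a small parser. This is a sketch in Python for illustration only (the operator itself is written in Go); the function name `parse_managed_zone` and the `default_project` parameter are hypothetical.

```python
def parse_managed_zone(zone_id, default_project):
    """Return (project, zone) for either accepted zone ID format.

    Accepts a relative resource name, projects/<project>/managedZones/<zone>,
    or a bare zone name, which is resolved against `default_project`.
    """
    parts = zone_id.split("/")
    if (len(parts) == 4 and parts[0] == "projects"
            and parts[2] == "managedZones" and parts[1] and parts[3]):
        return parts[1], parts[3]
    if zone_id and "/" not in zone_id:
        # Not a relative resource name: keep the existing behavior.
        return default_project, zone_id
    raise ValueError(f"unsupported managed zone ID: {zone_id!r}")


print(parse_managed_zone("projects/project-id/managedZones/zoneid", "svc-project"))
print(parse_managed_zone("zoneid", "svc-project"))
```

Falling back to the cluster's own project for bare names keeps existing configurations working, while the longer form lets the zone live in another project such as the XPN host project.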

(optional) Out of Scope:

All modifications to the openshift-installer are handled in other cards in the epic.

Engineering Details:

- https://github.com/openshift/api/blob/0ee1471bcabbfefade29abeae5aab53366d0493f/config/v1/types_dns.go#L43

- https://github.com/openshift/cluster-ingress-operator/blob/14f40c789e4e851aae2d812ea8e6fc1e70d9977f/pkg/dns/gcp/provider.go#L51-L60 oller.go#L562-L571

- https://cloud.google.com/dns/docs/reference/v1/managedZones/get#parameters

- https://cloud.google.com/apis/design/resource_names#relative_resource_name

Feature Overview

Allow users to interactively adjust the network configuration for a host after booting the agent ISO.

Goals

Configure network after host boots

The user has Static IPs, VLANs, and/or bonds to configure, but has no idea of the device names of the NICs. They don't enter any network config in agent-config.yaml. Instead they configure each host's network via the text console after it boots into the image.

Epic Goal

- Allow users to interactively adjust the network configuration for a host after booting the agent ISO, before starting processes that pull container images.

Why is this important?

- Configuring the network prior to booting a host is difficult and error-prone. Not only is the nmstate syntax fairly arcane, but the advent of 'predictable' interface names means that interfaces retain the same name across reboots but it is nearly impossible to predict what they will be. Applying configuration to the correct hosts requires correct knowledge and input of MAC addresses. All of these present opportunities for things to go wrong, and when they do the user is forced to return to the beginning of the process and generate a new ISO, then boot all of the hosts in the cluster with it again.

Scenarios

- The user has Static IPs, VLANs, and/or bonds to configure, but has no idea of the device names of the NICs. They don't enter any network config in agent-config.yaml. Instead they configure each host's network via the text console after it boots into the image.

- The user has Static IPs, VLANs, and/or bonds to configure, but makes an error entering the configuration in agent-config.yaml so that (at least) one host will not be able to pull container images from the release payload. They correct the configuration for that host via the text console before proceeding with the installation.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Dependencies (internal and external)

- ...

Previous Work (Optional):

- …

Open questions::

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

In the console service from AGENT-453, check whether we are able to pull the release image, and display this information to the user before prompting to run nmtui.

If we can access the image, then exit the service if there is no user input after some timeout, to allow the installation to proceed in the automation flow.

Enhance the openshift-install agent create image command so that the agent-nmtui executable will be embedded in the agent ISO

After having created the agent ISO, the agent-nmtui must be added to the ISO using the following approach:

1. Unpack the agent ISO in a temporary folder

2. Unpack the /images/ignition.img compressed cpio archive in a temporary folder

3. Create a new ignition.img compressed cpio archive by appending the agent-nmtui

4. Create a new agent ISO with the updated ignition.img

Implementation note

Portions of code from a PoC located at https://github.com/andfasano/gasoline could be re-used

When running the openshift-install agent create image command, the installer first needs to extract the agent-tui executable from the release payload into a temporary folder

When the agent-tui is shown during the initial host boot, if the pull release image check fails then an additional checks box is shown along with a details text view.

The content of the details view is continuously updated with the details of the failed check, but the user cannot move the focus to the details box (using the arrow/tab keys), and thus cannot scroll its content (using the up/down arrow keys)

Create a systemd service that runs at startup prior to the login prompt and takes over the console. This should start after the network-online target, and block the login prompt appearing until it exits.

This should also block, at least temporarily, any services that require pulling an image from the registry (i.e. agent + assisted-service).

Right now all the connectivity checks are executed simultaneously, which does not seem necessary, especially in the positive scenario, i.e. when the release image can be pulled without any issue.

So, the connectivity-related checks should be performed only when the release image is not accessible, to provide further information to the user.

The initial condition for allowing the installation to continue (or not) should then depend only on the result of the primary check (right now, just the image pull) and not on the secondary ones (http/dns/ping), which are purely informative.

Note: this approach will also help to manage those cases where, currently, the release image can be pulled but the host does not answer the ping
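The proposed ordering can be sketched as below. This is an illustration in Python rather than the agent-tui's actual code; `run_checks` and the check names are hypothetical, and each check is modeled as a callable returning True on success.

```python
def run_checks(primary, secondary):
    """Gate on the primary check; run secondary checks only if it fails.

    `primary` is a callable, `secondary` maps a check name to a callable.
    """
    if primary():
        # Release image is pullable: nothing else needs to run.
        return {"can_continue": True, "details": {}}
    # Primary failed: gather informative results for troubleshooting.
    details = {name: check() for name, check in secondary.items()}
    return {"can_continue": False, "details": details}


result = run_checks(
    primary=lambda: False,
    secondary={"dns": lambda: True, "ping": lambda: False, "http": lambda: False},
)
print(result)
```

Because `can_continue` depends only on the primary check, a host that can pull the release image but does not answer ping is no longer blocked.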

The openshift-install agent create image will need to fetch the agent-tui executable so that it could be embedded within the agent ISO. For this reason the agent-tui must be available in the release payload, so that it could be retrieved even when the command is invoked in a disconnected environment.

Currently the agent-tui always displays the additional checks (nslookup/ping/http get), even when the primary check (pull image) passes. This may confuse the user, because the additional checks do not prevent the agent-tui from completing successfully; they are only informative, to allow better troubleshooting of the issue (so they are not needed in the positive case).

The additional checks should then be shown only when the primary check fails for any reason.

As a user, I need information about common misconfigurations that may be preventing the automated installation from proceeding.

If we are unable to access the release image from the registry, provide sufficient debugging information to the user to pinpoint the problem. Check for:

- DNS

- ping

- HTTP

- Registry login

- Release image

The node zero ip is currently hard-coded inside set-node-zero.sh.template and in the ServiceBaseURL template string.

ServiceBaseURL is also hard-coded inside:

- apply-host-config.service.template

- create-cluster-and-infraenv-service.template

- common.sh.template

- start-agent.sh.template

- start-cluster-installation.sh.template

- assisted-service.env.template

We need to remove this hard-coding and allow a user to set the node zero IP through the TUI and have it reflected by the agent services and scripts.
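One way to picture the change is to derive ServiceBaseURL from the node zero IP at render time instead of baking it into each template. The sketch below is in Python for illustration only; the function name `render_service_base_url`, the URL layout, and the default port 8090 are assumptions, not values taken from the templates listed above.

```python
import ipaddress
from string import Template

# Hypothetical template; the real templates may use a different layout.
SERVICE_BASE_URL = Template("http://${host}:${port}/")


def render_service_base_url(node_zero_ip, port=8090):
    """Build the service base URL from a user-supplied node zero IP."""
    addr = ipaddress.ip_address(node_zero_ip)  # raises ValueError if invalid
    # IPv6 literals must be bracketed inside a URL.
    host = f"[{addr}]" if addr.version == 6 else str(addr)
    return SERVICE_BASE_URL.substitute(host=host, port=port)


print(render_service_base_url("192.168.111.80"))
print(render_service_base_url("fd00::1"))
```

With a single rendering function, every service and script that needs the URL can be regenerated from the one value the user sets.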

OCP/Telco Definition of Done

Epic Template descriptions and documentation.

Epic Goal

- The goal of this epic is to begin the process of expanding support of OpenShift on ppc64le hardware to include IPI deployments against the IBM Power Virtual Server (PowerVS) APIs.

Why is this important?

The goal of this initiative is to help boost adoption of OpenShift on ppc64le. This can be further broken down into several key objectives.

- For IBM, furthering adoption of OpenShift will continue to drive adoption on their Power hardware. In parallel, this can be used by existing customers to migrate their old Power on-prem workloads to a cloud environment.

- For the Multi-Arch team, this represents our first opportunity to develop an IPI offering on one of the IBM platforms. Right now, we depend on IPI on libvirt to cover our CI needs; however, this is not a supported platform for customers. PowerVS would address this caveat for ppc64le.

- By bringing in PowerVS, we can provide customers with the easiest possible experience to deploy and test workloads on IBM architectures.

- Customers already have UPI methods to solve their OpenShift on-prem needs for ppc64le. This gives them an opportunity for a cloud-based option, furthering our hybrid-cloud story.

Scenarios

- As a user with a valid PowerVS account, I would like to provide those credentials to the OpenShift installer and get a full cluster up on IPI.

Technical Specifications

Some of the technical specifications have been laid out in MULTIARCH-75.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

Dependencies (internal and external)

- Images are built in the RHCOS pipeline and pushed in the OVA format to the IBM Cloud.

- Installer is extended to support PowerVS as a new platform.

- Machine and cluster APIs are updated to support PowerVS.

- A terraform provider is developed against the PowerVS APIs.

- A load balancing strategy is determined and made available.

- Networking details are sorted out.

Open questions::

- Load balancing implementation?

- Networking strategy given the lack of virtual network APIs in PowerVS.

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Epic Goal

- Improve IPI on Power VS in the 4.13 cycle

Running doc to describe terminologies and concepts which are specific to Power VS - https://docs.google.com/document/d/1Kgezv21VsixDyYcbfvxZxKNwszRK6GYKBiTTpEUubqw/edit?usp=sharing

Recently, the image registry team decided with this change[1] that major cloud platforms cannot have `emptyDir` as the storage backend. IBMCloud uses ibmcos, which is what we would ideally use as well. A few issues have been identified with using ibmcos as is in the cluster image registry operator, and some solutions are identified here[2]. Basically, we would need the PowerVS platform to be supported for ibmcos, plus an API change to add resourceGroup to the infra API. This only affects 4.13 and is not an issue for 4.12.

[1] https://github.com/openshift/cluster-image-registry-operator/pull/820

BU Priority Overview

As our customers create more and more clusters, it will become vital for us to help them support their fleet of clusters. Currently, our users have to use a different interface (ACM UI) in order to manage their fleet of clusters. Our goal is to provide our users with a single interface for everything from managing a fleet of clusters to deep diving into a single cluster. This means going to a single URL – your Hub – to interact with your OCP fleet.

Goals

The goal of this tech preview update is to improve the experience from the last round of tech preview. The following items will be improved:

- Improved Cluster Picker: Moved to Masthead for better usability, filter/search

- Support for Metrics: Metrics are now visualized from Spoke Clusters

- Avoid UI Mismatch: Dynamic Plugins from Spoke Clusters are disabled

- Console URLs Enhanced: Cluster Name Added to URL for Quick Links

- Security Improvements: Backend Proxy and Auth updates

An epic we can duplicate for each release to ensure we have a place to catch things we ought to be doing regularly but can tend to fall by the wayside.

As a developer I want a github pr template that allows me to provide:

- functionality explanation

- assignee

- screenshots or demo

- draft test cases

Key Objective

Providing our customers with a single simplified User Experience (Hybrid Cloud Console) that is extensible, can run locally or in the cloud, and is capable of everything from managing the fleet to deep diving into a single cluster.

Why customers want this?

- Single interface to accomplish their tasks

- Consistent UX and patterns

- Easily accessible: One URL, one set of credentials

Why we want this?

- Shared code - improve the velocity of both teams and most importantly ensure consistency of the experience at the code level

- Pre-built PF4 components

- Accessibility & i18n

- Remove barriers for enabling ACM

Phase 2 Goal: Productization of the united Console

- Enable user to quickly change context from fleet view to single cluster view

- Add Cluster selector with “All Cluster” Option. “All Cluster” = ACM

- Shared SSO across the fleet

- Hub OCP Console can connect to remote clusters API

- When ACM is installed, the user starts from the fleet overview, aka “All Clusters”

- Share UX between views

- ACM Search —> resource list across fleet -> resource details that are consistent with single cluster details view

- Add Cluster List to OCP —> Create Cluster

Installed operators, operator details, operand details, and operand create pages should work as expected in a multicluster environment when copied CSVs are disabled on any cluster in the fleet.

AC:

- Console backend consumes "copiedCSVsDisabled" flags for each cluster in the fleet

- Frontend handles copiedCSVsDisabled behavior "per-cluster" and OLM pages work as expected no matter which cluster is selected

In order for hub cluster console OLM screens to behave as expected in a multicluster environment, we need to gather "copiedCSVsDisabled" flags from managed clusters so that the console backend/frontend can consume this information.

AC:

- The console operator syncs "copiedCSVsDisabled" flags from managed clusters into the hub cluster managed cluster config.

Mock a multicluster environment in our CI using Cypress, without provisioning multiple clusters using a combination of cy.intercept and updating window.SERVER flags in the before section of the test scenarios.

Acceptance Criteria:

Without provisioning additional clusters:

- mock server flags to render a cluster dropdown

- mock sample pod data for a fictional cluster

Description of problem:

When viewing a resource that exists for multiple clusters, the data may be from the wrong cluster for a short time after switching clusters using the multicluster switcher.

Version-Release number of selected component (if applicable):

4.10.6

How reproducible:

Always

Steps to Reproduce:

1. Install RHACM 2.5 on OCP 4.10 and enable the FeatureGate to get multicluster switching

2. From the local-cluster perspective, view a resource that would exist on all clusters, like /k8s/cluster/config.openshift.io~v1~Infrastructure/cluster/yaml

3. Switch to a different cluster in the cluster switcher

Actual results:

Content for resource may start out correct, but then switch back to the local-cluster version before switching to the correct cluster several moments later.

Expected results:

Content should always be shown from the selected cluster.

Additional info:

Migrated from bugzilla: https://bugzilla.redhat.com/show_bug.cgi?id=2075657

Description of problem:

When multi-cluster is enabled and the console can display data from other clusters, we should either change or disable how we filter the OperatorHub catalog by arch / OS. We assume that the arch and OS of the pod running the console is the same as the cluster, but for managed clusters, it could be something else, which would cause us to incorrectly filter operators.

Version-Release number of selected component (if applicable):

How reproducible:

Steps to Reproduce:

1. 2. 3.

Actual results:

Expected results:

Additional info:

Migrated from https://bugzilla.redhat.com/show_bug.cgi?id=2089939

Description of problem:

There is a possible race condition in the console operator where the managed cluster config gets updated after the console deployment and doesn't trigger a rollout.

Version-Release number of selected component (if applicable):

4.10

How reproducible:

Rarely

Steps to Reproduce:

1. Enable multicluster tech preview by adding TechPreviewNoUpgrade featureSet to FeatureGate config. (NOTE THIS ACTION IS IRREVERSIBLE AND WILL MAKE THE CLUSTER UNUPGRADEABLE AND UNSUPPORTED)

2. Install ACM 2.5+

3. Import a managed cluster using either the ACM console or the CLI

4. Once that managed cluster is showing in the cluster dropdown, import a second managed cluster

Actual results:

Sometimes the second managed cluster will never show up in the cluster dropdown

Expected results:

The second managed cluster eventually shows up in the cluster dropdown after a page refresh

Additional info:

Migrated from bugzilla: https://bugzilla.redhat.com/show_bug.cgi?id=2055415
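
A widely used way to make a deployment roll out whenever its config changes, regardless of update ordering, is to stamp a hash of the config onto the deployment and compare it on every sync. The sketch below shows that general technique under stated assumptions; it is not the console operator's actual code, and the annotation key and the `config_hash`, `needs_rollout`, and `stamp` helpers are hypothetical.

```python
import hashlib
import json

# Hypothetical annotation key used only for this illustration.
CONFIG_HASH_ANNOTATION = "example.com/managed-cluster-config-hash"


def config_hash(config: dict) -> str:
    """Return a stable SHA-256 hex digest of the config contents."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def needs_rollout(annotations: dict, config: dict) -> bool:
    """True when the hash recorded on the deployment differs from the config."""
    return annotations.get(CONFIG_HASH_ANNOTATION) != config_hash(config)


def stamp(annotations: dict, config: dict) -> dict:
    """Return a copy of the annotations with the current config hash recorded."""
    updated = dict(annotations)
    updated[CONFIG_HASH_ANNOTATION] = config_hash(config)
    return updated


# The deployment was synced when only one managed cluster was configured.
one_cluster = {"managedClusters": ["managed-1"]}
two_clusters = {"managedClusters": ["managed-1", "managed-2"]}
annotations = stamp({}, one_cluster)

unchanged = needs_rollout(annotations, one_cluster)
changed = needs_rollout(annotations, two_clusters)
```

Because the comparison runs against the current config on each sync, a config update that lands after the deployment was written is still detected on the next sync.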

As a Dynamic Plugin developer, I would like to render the version of my Dynamic Plugin in the About modal. For that, we need to check the `LoadedDynamicPluginInfo` instances and surface their `metadata.name` and `metadata.version` in the About modal.

AC: Render name and version for each Dynamic Plugin into the About modal.

Original description: When ACM moved to the unified console experience, we lost the ability in our standalone console to display our version information in our own About modal. We would like to be able to add our product and version information into the OCP About modal.

Feature Overview

Allow configuring compute and control plane nodes across multiple subnets for on-premise IPI deployments. With nodes separated into subnets, also allow using an external load balancer instead of the built-in one (keepalived/haproxy) that the IPI workflow installs, so that the customer can configure their own load balancer with the ingress and API VIPs pointing to nodes in the separate subnets.

Goals

I want to install OpenShift with IPI on an on-premise platform (high priority for bare metal and vSphere) and I need to distribute my control plane and compute nodes across multiple subnets.

I want to use IPI automation but I will configure an external load balancer for the API and Ingress VIPs, instead of using the built-in keepalived/haproxy-based load balancer that comes with the on-prem platforms.

Background, and strategic fit

Customers require using multiple logical availability zones to define their architecture and topology for their datacenter. OpenShift clusters are expected to fit in this architecture for the high availability and disaster recovery plans of their datacenters.

Customers want the benefits of IPI and automated installations (and want to avoid UPI). At the same time, when they expect high traffic in their workloads, they design their clusters with external load balancers that hold the VIPs of the OpenShift clusters.

Load balancers can distribute incoming traffic across multiple subnets, which is something our built-in load balancers aren't able to do and which represents a big limitation for the topologies customers are designing.

While this is possible with IPI AWS, this isn't available with on-premise platforms installed with IPI (for the control plane nodes specifically), and customers see this as a gap in OpenShift for on-premise platforms.

Functionalities per Epic

| Epic | Control Plane with Multiple Subnets | Compute with Multiple Subnets | Doesn't need external LB | Built-in LB |
|---|---|---|---|---|
| ✓ | ✓ | ✓ | ✓ | |
| ✓ | ✓ | ✓ | ✕ | |
| ✓ | ✓ | ✓ | ✓ | |
| ✓ | ✓ | ✓ | ✓ | |
| ✓ | ✓ | ✓ | | |
| ✓ | ✓ | ✓ | ✕ | |
| ✓ | ✓ | ✓ | ✕ | |
| ✓ | ✓ | ✓ | ✓ | |
| ✕ | ✓ | ✓ | ✓ | |
| ✕ | ✓ | ✓ | ✓ | |
| ✕ | ✓ | ✓ | ✓ | |

Previous Work

Workers on separate subnets with IPI documentation

We can already deploy compute nodes on separate subnets by preventing the built-in LBs from running on the compute nodes. This is documented for bare metal only for the Remote Worker Nodes use case: https://docs.openshift.com/container-platform/4.11/installing/installing_bare_metal_ipi/ipi-install-installation-workflow.html#configure-network-components-to-run-on-the-control-plane_ipi-install-installation-workflow

This procedure works on vSphere too, although it has no QE CI coverage and is not documented.

External load balancer with IPI documentation

- Bare Metal: https://docs.openshift.com/container-platform/4.11/installing/installing_bare_metal_ipi/ipi-install-post-installation-configuration.html#nw-osp-configuring-external-load-balancer_ipi-install-post-installation-configuration

- vSphere: https://docs.openshift.com/container-platform/4.11/installing/installing_vsphere/installing-vsphere-installer-provisioned.html#nw-osp-configuring-external-load-balancer_installing-vsphere-installer-provisioned

Scenarios

- vSphere: I can define 3 or more networks in vSphere and distribute my masters and workers across them. I can configure an external load balancer for the VIPs.

- Bare metal: I can configure the IPI installer and the agent-based installer to place my control plane nodes and compute nodes on 3 or more subnets at installation time. I can configure an external load balancer for the VIPs.

Acceptance Criteria

- Can place compute nodes on multiple subnets with IPI installations

- Can place control plane nodes on multiple subnets with IPI installations

- Can configure external load balancers for clusters deployed with IPI with control plane and compute nodes on multiple subnets

- Can configure VIPs in an external load balancer that are routed to nodes on separate subnets and VLANs

- Documentation exists for all the above cases

Epic Goal

As an OpenShift infrastructure owner I need to deploy OCP on OpenStack with the installer-provisioned infrastructure workflow and configure my own load balancers

Why is this important?

Customers want to use their own load balancers, but IPI comes with built-in LBs based on keepalived and haproxy.

Scenarios

- A large deployment routed across multiple failure domains without stretched L2 networks would require dynamically routing the control plane VIP traffic through load balancers capable of living in multiple L2 networks.

- Customers who want to use their existing LB appliances for the control plane.

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- QE - MUST test a scenario where we disable the internal LB, set up an external LB, and the OCP deployment runs fine.

- Documentation - we need to document all the gotchas regarding this type of deployment, even the specifics about the load-balancer itself (routing policy, dynamic routing, etc)

- For Tech Preview, we won't require Fixed IPs. This is something targeted for 4.14.

Dependencies (internal and external)

- For GA, we'll need Fixed IPs, already WIP by vsphere: https://issues.redhat.com/browse/OCPBU-179

Previous Work:

vsphere has done the work already via https://issues.redhat.com/browse/SPLAT-409

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

Test the feature with e2e.

We basically want to check that the Keepalived & HAproxy pods don't run when an external LB is being deployed.

The test has to run on all on-prem platforms, for all the jobs, so we can test the default LB as well.

This is needed once the API patch for External LB has merged.
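
The check described above can be sketched as a pure function over the pod names found on the cluster. This is an illustrative outline, not the actual e2e test: the load balancer type values and the `keepalived-` and `haproxy-` pod name prefixes are assumptions to verify against the real API and manifests, and `built_in_lb_pods` and `check_lb_pods` are hypothetical helpers.

```python
# Assumed values for illustration; verify against the real API and manifests.
USER_MANAGED = "UserManaged"
DEFAULT = "OpenShiftManagedDefault"
BUILT_IN_LB_PREFIXES = ("keepalived-", "haproxy-")


def built_in_lb_pods(pod_names):
    """Return the sorted pod names that look like built-in LB pods."""
    return sorted(n for n in pod_names if n.startswith(BUILT_IN_LB_PREFIXES))


def check_lb_pods(lb_type, pod_names):
    """Return a list of failure messages; an empty list means the check passed."""
    found = built_in_lb_pods(pod_names)
    if lb_type == USER_MANAGED and found:
        # An external LB is in use, so no built-in LB pod should be running.
        return [f"unexpected built-in LB pod: {name}" for name in found]
    if lb_type != USER_MANAGED and not found:
        # The default LB is in use, so at least one built-in LB pod is expected.
        return ["expected built-in LB pods (Keepalived/HAProxy) for the default LB"]
    return []


external_ok = check_lb_pods(USER_MANAGED, ["coredns-master-0"])
external_bad = check_lb_pods(USER_MANAGED, ["keepalived-master-0", "coredns-master-0"])
default_ok = check_lb_pods(DEFAULT, ["keepalived-master-0", "haproxy-master-0"])
default_bad = check_lb_pods(DEFAULT, ["coredns-master-0"])
```

Covering both branches in one function lets the same test run on every on-prem job, including jobs that use the default LB.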

Epic Goal

As an OpenShift installation admin I want to use the Assisted Installer, ZTP and IPI installation workflows to deploy a cluster that has remote worker nodes in subnets different from the local subnet, while keeping my VIPs with the built-in load balancing services (haproxy/keepalived).

While this request is most common with OpenShift on bare metal, any platform using the ingress operator will benefit from this enhancement.

Customers using platform none run external load balancers and won't need this; it is specific to platforms deployed via AI, ZTP and IPI.

Why is this important?

Customers and partners want to install remote worker nodes on day 1. Because the built-in network services we provide with Assisted Installer, ZTP and IPI manage the VIP for ingress, we need to ensure that these services remain in the local subnet where the VIPs are configured.

Previous Work

The bare metal IPI team added a workflow that allows placing the VIPs on the masters. While this isn't an ideal solution, it is the only option documented:

Configuring network components to run on the control plane

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>

- https://github.com/openshift/baremetal-runtimecfg/pull/224

- https://github.com/openshift/baremetal-runtimecfg/pull/207

- https://github.com/openshift/cluster-ingress-operator/pull/858

- https://github.com/openshift/machine-config-operator/pull/3540

- https://github.com/openshift/machine-config-operator/pull/3431

Epic Goal

- Review the OVN Interconnect proposal and figure out the work that needs to be done in ovn-kubernetes to be able to move to this new OVN architecture.

Why is this important?

OVN IC will be the model used in Hypershift.

Scenarios

- ...

Acceptance Criteria

- CI - MUST be running successfully with tests automated

- Release Technical Enablement - Provide necessary release enablement details and documents.

- ...

Dependencies (internal and external)

- ...

Previous Work (Optional):

- …

Open questions::

- …

Done Checklist

- CI - CI is running, tests are automated and merged.

- Release Enablement <link to Feature Enablement Presentation>

- DEV - Upstream code and tests merged: <link to meaningful PR or GitHub Issue>

- DEV - Upstream documentation merged: <link to meaningful PR or GitHub Issue>

- DEV - Downstream build attached to advisory: <link to errata>

- QE - Test plans in Polarion: <link or reference to Polarion>

- QE - Automated tests merged: <link or reference to automated tests>

- DOC - Downstream documentation merged: <link to meaningful PR>